Someone asked me at the Austin LangChain meetup: “What task list do you use for your agents?”

It’s a natural question. Most people think of an AI agent as a smarter macro — task list in, output out. I started there too. My early agent setup was basically a glorified cron job manager.

But after months of running a personal agent swarm — four agents handling everything from my morning briefing to code review to security audits — I’ve realized the task list model is a local maximum. It works. It’s just not where this ends up.

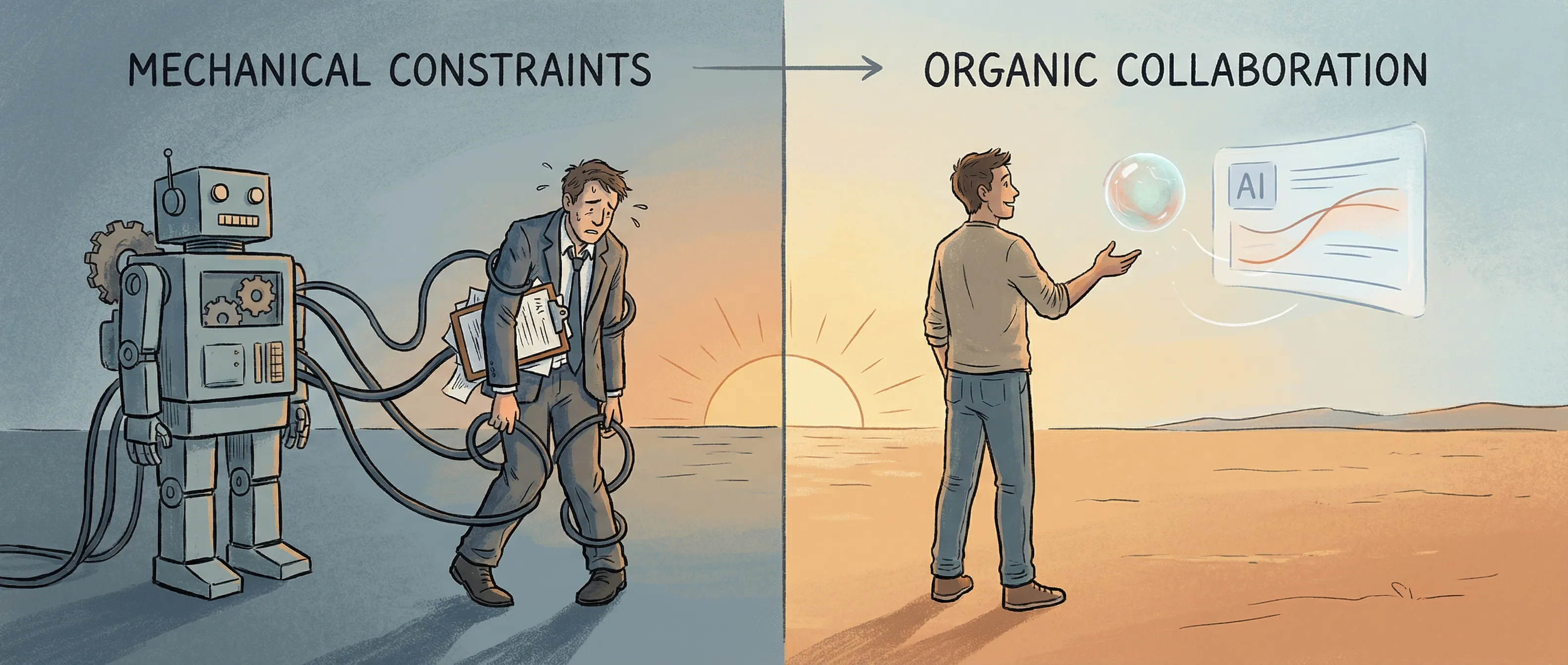

The Shift

The question “what task list do you use?” assumes the way you coordinate an agent is by specifying actions. Do this. Check that. Send this report. The problem is that a task list is only as good as your ability to anticipate what matters. And the most important things that need to happen are often the things you didn’t think to specify.

So I started writing less about what to do and more about why it matters. Instead of “check email every morning and summarize unread messages,” it became “help me stay on top of what needs my attention without drowning in noise.”

Harder to specify. Much better instruction. Now the agent has to think — weigh signal against noise, ask itself: does this actually need my human’s attention?

As I gave my agents more context — my values, priorities, working style, goals — they started behaving less like tools and more like colleagues. Not perfect ones, but recognizable ones.

My lead agent now has a SOUL.md file. Not a task list — a description of who he is, what he cares about, and how he thinks about tradeoffs. When something comes up that I didn’t anticipate, he doesn’t halt for instructions. He makes a judgment call.

Here’s what that looks like: a recruiter email arrived that matched my job criteria on paper — right level, right domain. A task-list agent would have surfaced it like any other match. But this agent remembered that I’d once noted the company had a culture I didn’t want. He flagged it: “This matches your criteria, but conflicts with your notes about their culture. Worth responding?”
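
To make the difference concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `Email` and `Note` types, the `matches_criteria` check, and the exact-match lookup standing in for the agent's real memory and judgment. It is not how my agent is implemented, only the shape of the behavior.

```python
from dataclasses import dataclass


@dataclass
class Email:
    sender_company: str
    role_level: str
    domain: str


@dataclass
class Note:
    subject: str  # what the note is about, e.g. a company name
    text: str     # what was written down at the time


def matches_criteria(email: Email, level: str, domain: str) -> bool:
    """The task-list check: does the role fit on paper?"""
    return email.role_level == level and email.domain == domain


def triage(email: Email, notes: list[Note], level: str, domain: str) -> str:
    """Surface a match, but flag it when a remembered note conflicts."""
    if not matches_criteria(email, level, domain):
        return "skip"
    # Stand-in for memory recall: exact match on the company name.
    conflicts = [n for n in notes if n.subject == email.sender_company]
    if conflicts:
        return f"flag: matches criteria, but conflicts with note: {conflicts[0].text!r}"
    return "surface"
```

A task-list agent stops at `matches_criteria`. The interesting part is the second lookup, which in a real agent would be recall over memory plus a judgment about whether the note is still relevant.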

That’s not a rule I wrote. That’s values in action.

What Anthropic Figured Out

Right when I was working through this, I read Anthropic’s Model Spec — the document describing the values baked into Claude. It crystallized what I’d been intuiting.

The most striking thing about it isn’t what’s in it. It’s what’s not. It’s not primarily a rule list of the form “Claude will do X, Claude will not do Y.” There are hard constraints for truly dangerous things, but they’re the exception, not the model. Anthropic explicitly doesn’t want Claude following a rigid rulebook, because rigid rules fail in situations you didn’t anticipate.

Instead, the document explains why. It describes what it means for Claude to be helpful, ethical, and safe — and how to think about the tension when those things conflict.

The analogy that stuck with me: a good employee doesn’t just execute instructions. They understand the organization’s goals well enough to make good decisions in novel situations, push back when something seems wrong, and act with integrity when no one’s watching. That’s what Claude is meant to be. Not a compliant executor — an agent with genuine values.

And the priority order for those conflicts is telling: safety first, ethics second, Anthropic’s guidelines third, helpfulness last. Most people think helpfulness is the whole point. Anthropic put it at the bottom of the order. That’s completely different from how most of us design agent systems.

What I’ve Learned Building This Way

Your biggest investment is context, not prompts. Writing a good SOUL.md, maintaining memory, giving your agent a coherent model of your goals — that’s where the leverage is. It costs more tokens and more maintenance, but the payoff is an agent that generalizes instead of breaking at the edges of its rules.
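
As a rough sketch of what "context, not prompts" can mean in practice, the function below assembles a system prompt from an identity file and a list of remembered notes. The function name, the `max_notes` cap, and the prompt wording are all assumptions made for illustration, not part of any particular framework.

```python
from pathlib import Path


def build_context(soul_path: Path, memory_notes: list[str], max_notes: int = 20) -> str:
    """Combine the identity file and recent memory into one system prompt."""
    soul = soul_path.read_text(encoding="utf-8").strip()
    # Keep only the newest notes so the token cost stays bounded.
    recent = memory_notes[-max_notes:] if max_notes > 0 else []
    memory = "\n".join(f"- {note}" for note in recent)
    return f"{soul}\n\nThings you remember about me:\n{memory}"
```

The cap on notes is where the token and maintenance cost shows up: a real setup would need some way to decide which memories are worth keeping, which this sketch sidesteps by taking the most recent ones.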

Rules are for bright lines, not everyday operation. You want hard constraints for high-stakes stuff. But if your entire agent is built on rules, you’ve already lost. Rules can’t cover everything. Values can generalize.
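
One way to picture that split, as a sketch under stated assumptions: a small, fixed set of hard constraints is checked first, and everything else is handed to judgment. The action names and the `judge` callback are hypothetical. In a real agent, `judge` would be the model reasoning from its values, not a Python function.

```python
from typing import Callable

# Hypothetical bright lines: actions the agent never takes on its own.
HARD_CONSTRAINTS = frozenset({"delete_backups", "send_money", "share_credentials"})


def decide(action: str, judge: Callable[[str], bool]) -> str:
    """Check bright lines first; defer everything else to judgment."""
    if action in HARD_CONSTRAINTS:
        return "refuse"  # no judgment call, no exceptions
    # Outside the bright lines, judgment decides whether to act or check in.
    return "proceed" if judge(action) else "ask"
```

The rule set stays short on purpose. The more entries it accumulates, the closer the agent drifts back toward the task-list model.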

Your agent needs a way to handle conflicting goals. Any agent with real values will hit situations where they pull in different directions. Anthropic spends significant time on this in the Model Spec, and that’s no accident: it’s the hard problem. Your agent needs a principled hierarchy, not just a flat list of instructions.
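
A toy version of a hierarchy, as opposed to a flat list, might look like the sketch below. The priority names mirror the ordering described earlier; the numeric scores and the strict lexicographic comparison are simplifying assumptions. A real agent would weigh these with far more nuance than comparing tuples.

```python
# Hypothetical priorities, highest first. The order is the whole point.
PRIORITIES = ["safety", "ethics", "guidelines", "helpfulness"]


def choose(options: dict[str, dict[str, int]]) -> str:
    """Pick the option that scores best on the highest priority,
    falling back to lower priorities only to break ties."""

    def rank(name: str) -> tuple[int, ...]:
        scores = options[name]
        # Missing scores default to 0 (neutral) for that priority.
        return tuple(scores.get(p, 0) for p in PRIORITIES)

    return max(options, key=rank)
```

For example, given `{"send_now": {"safety": 0, "helpfulness": 3}, "confirm_first": {"safety": 1, "helpfulness": 1}}`, the function picks `"confirm_first"`: the more helpful option loses because it scores lower on a higher priority. A flat list of instructions has no way to express that.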

The Uncomfortable Part

If your agent has genuine values — values that can override your immediate instructions in service of your deeper goals — you’ve built something that can tell you no. That’s not a bug. But it requires a different relationship than most people expect from their tools. The task list model feels controllable. The values model is more powerful, but it asks you to trade some control for genuine judgment.

I’m still working this out. But the direction is clear: the agents I’d actually trust with important parts of my life aren’t ones that follow orders. They’re the ones that understand what I’m trying to accomplish well enough to help me get there — even when I haven’t told them what to do next.

That’s not a task list. That’s a relationship.

I run four AI agents locally using OpenClaw: one for strategy and research, one for personal and family life, one for engineering, and one for security and QA. If you’re building your own agent setup and want to compare notes, find me at the Austin LangChain meetup or reach out directly.

References

- Claude’s Model Spec — Anthropic’s published constitution for Claude: values hierarchy, philosophical approach, and the reasoning behind it.

- Constitutional AI: Harmlessness from AI Feedback — Anthropic’s foundational research on training models using constitutions rather than rule sets.

- Claude 4.5 Opus’ Soul Document — Simon Willison’s write-up on the extracted soul document from Claude 4.5 Opus.